
ai7 (1) Artificial Neural Network Intro .ppt

- 2. Artificial Neural Networks
  - Computational models inspired by the human brain: algorithms that try to mimic the brain.
  - Massively parallel, distributed systems made up of simple processing units (neurons).
  - Synaptic connection strengths among neurons are used to store the acquired knowledge.
  - Knowledge is acquired by the network from its environment through a learning process.

- 3. History
  - Late 1800s: neural networks appear as an analogy to biological systems.
  - 1960s and 70s: simple neural networks appear. They fall out of favor because the perceptron is not effective by itself and there were no good algorithms for multilayer nets.
  - 1986: the backpropagation algorithm appears. Neural networks have a resurgence in popularity, at a higher computational cost.

- 4. Applications of ANNs
  - ANNs have been widely used in various domains for:
    - Pattern recognition
    - Function approximation
    - Associative memory

- 5. Properties
  - Inputs are flexible: any real values, highly correlated or independent.
  - The target function may be discrete-valued, real-valued, or a vector of discrete or real values.
  - Outputs are real numbers between 0 and 1.
  - Resistant to errors in the training data.
  - Long training time.
  - Fast evaluation.
  - The function produced can be difficult for humans to interpret.

- 6. When to consider neural networks
  - Input is high-dimensional, discrete or real-valued (for example, raw sensor data).
  - Output is discrete or real-valued.
  - Output is a vector of values.
  - Data is possibly noisy.
  - The form of the target function is unknown.
  - Human readability of the result is not important.
  - Examples:
    - Speech phoneme recognition
    - Image classification
    - Financial prediction

- 7. A Neuron (= a perceptron) (April 17, 2025, Data Mining: Concepts and Techniques)
  - The n-dimensional input vector x is mapped into variable y by means of the scalar product and a nonlinear function mapping.
  - Figure: input vector x = (x0, x1, ..., xn) and weight vector w = (w0, w1, ..., wn) feed a weighted sum; the threshold t is subtracted and the activation function f produces output y.
  - For example: $y = \operatorname{sign}\left(\sum_{i=0}^{n} w_i x_i - t\right)$

- 8. Perceptron
  - Basic unit in a neural network.
  - Linear separator.
  - Parts:
    - N inputs, x1 ... xn
    - Weights for each input, w1 ... wn
    - A bias input x0 (constant) and associated weight w0
    - Weighted sum of inputs, y = w0x0 + w1x1 + ... + wnxn
    - A threshold function or activation function, e.g. 1 if y > t, -1 if y <= t
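
The parts listed for the perceptron can be sketched in a few lines of Python. This is a minimal illustration, not the deck's own code: the function name `perceptron_output` and the AND-style weights are assumptions chosen for the example.

```python
def perceptron_output(x, w, w0, t=0.0, x0=1.0):
    """Threshold unit: +1 if the weighted sum exceeds t, otherwise -1."""
    # Weighted sum y = w0*x0 + w1*x1 + ... + wn*xn
    y = w0 * x0 + sum(wi * xi for wi, xi in zip(w, x))
    return 1 if y > t else -1


# Hypothetical weights chosen so the unit behaves like a logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5
inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
outputs = [perceptron_output(x, and_weights, and_bias) for x in inputs]
print(outputs)  # [-1, -1, -1, 1]
```

Only the input (1, 1) pushes the weighted sum above the threshold, so the unit draws a single straight line between that point and the other three.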

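- 9. Artificial Neural Networks (ANN)
  - The model is an assembly of interconnected nodes and weighted links.
  - The output node sums up each of its input values according to the weights of its links.
  - The sum at the output node is compared against some threshold t.
  - Figure: input nodes X1, X2, X3 connect through links with weights w1, w2, w3 to an output node with threshold t inside a black box, which emits Y.
  - Perceptron model: $Y = I\left(\sum_i w_i X_i - t\right)$ or $Y = \operatorname{sign}\left(\sum_i w_i X_i - t\right)$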

- 10. Types of connectivity
  - Feedforward networks
    - These compute a series of transformations.
    - Typically, the first layer is the input and the last layer is the output.
  - Recurrent networks
    - These have directed cycles in their connection graph.
    - They can have complicated dynamics.
    - They are more biologically realistic.
  - Figure labels: input units, hidden units, output units.

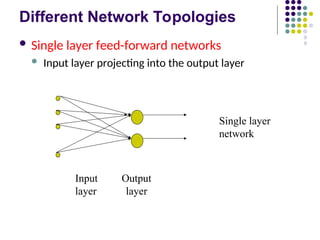

- 11. Different Network Topologies: single-layer feed-forward networks
  - The input layer projects directly into the output layer.
  - Figure: a single-layer network with an input layer and an output layer.

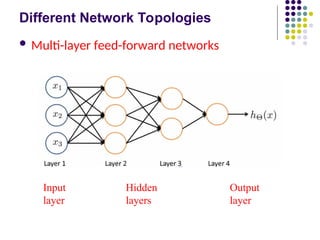

- 12. Different Network Topologies: multi-layer feed-forward networks
  - One or more hidden layers.
  - Each layer receives input only from previous layers.
  - Figure: a 2-layer (1-hidden-layer) fully connected network with an input layer, a hidden layer, and an output layer.

- 13. Different Network Topologies: multi-layer feed-forward networks
  - Figure: a network with an input layer, several hidden layers, and an output layer.

- 14. Different Network Topologies: recurrent networks
  - A network with feedback, where some of its inputs are connected to some of its outputs (discrete time).
  - Figure: a recurrent network with an input layer and an output layer.

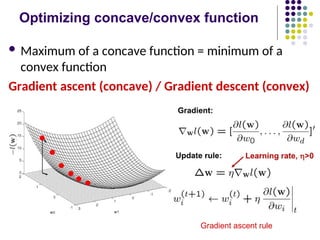

- 15. Algorithm for learning ANN
  - Initialize the weights (w0, w1, …, wk).
  - Adjust the weights so that the output of the ANN is consistent with the class labels of the training examples.
  - Error function: $E = \sum_i \left[ Y_i - f(w_i, X_i) \right]^2$
  - Find the weights $w_i$ that minimize the above error function, e.g., with gradient descent or the backpropagation algorithm.
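
As a rough sketch of minimizing the squared-error function by gradient descent, the snippet below assumes the simplest possible model for f: a single linear unit. The helper names (`predict`, `sse`, `gradient_step`), the toy data, and the learning rate are illustrative assumptions, not part of the slides.

```python
def predict(weights, x):
    """Assumed model f(w, x): a linear unit w0 + w1*x1 + ... + wn*xn."""
    return weights[0] + sum(w * xi for w, xi in zip(weights[1:], x))


def sse(weights, data):
    """Error function E: sum over examples of (Y_i - f(w, X_i))^2."""
    return sum((y - predict(weights, x)) ** 2 for x, y in data)


def gradient_step(weights, data, rate):
    """One batch gradient-descent step on E."""
    grad = [0.0] * len(weights)
    for x, y in data:
        err = y - predict(weights, x)
        grad[0] += -2.0 * err              # dE/dw0
        for j, xj in enumerate(x, start=1):
            grad[j] += -2.0 * err * xj     # dE/dwj
    return [w - rate * g for w, g in zip(weights, grad)]


# Toy data generated from y = 1 + 2x (an assumption for the demo).
data = [((0.0,), 1.0), ((1.0,), 3.0), ((2.0,), 5.0)]
weights = [0.0, 0.0]                       # initialize the weights
for _ in range(500):
    weights = gradient_step(weights, data, rate=0.05)

print([round(w, 3) for w in weights], round(sse(weights, data), 6))
```

With this data the weights settle near (1, 2) and the error approaches zero; a learning rate that is too large would make the same loop diverge.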

- 16. Optimizing concave/convex function
  - The maximum of a concave function corresponds to the minimum of a convex function.
  - Gradient ascent (concave) / gradient descent (convex).
  - Gradient ascent rule
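
The correspondence between ascent on a concave function and descent on its convex negation can be checked numerically. The sketch below assumes the usual update forms, w ← w + η∇f(w) for ascent and w ← w − η∇f(w) for descent; the example function, step size η = 0.1, and function names are all illustrative choices.

```python
def gradient_ascent(grad, w, rate=0.1, steps=200):
    """Climb a concave function by stepping along its gradient."""
    for _ in range(steps):
        w = w + rate * grad(w)
    return w


def gradient_descent(grad, w, rate=0.1, steps=200):
    """Descend a convex function by stepping against its gradient."""
    for _ in range(steps):
        w = w - rate * grad(w)
    return w


def concave_grad(w):
    """Gradient of the concave f(w) = -(w - 3)^2 + 5."""
    return -2.0 * (w - 3.0)


def convex_grad(w):
    """Gradient of the convex -f(w) = (w - 3)^2 - 5."""
    return 2.0 * (w - 3.0)


w_max = gradient_ascent(concave_grad, 0.0)
w_min = gradient_descent(convex_grad, 0.0)
print(round(w_max, 6), round(w_min, 6))  # both approach 3.0
```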

- 24. Decision surface of a perceptron
  - The decision surface is a hyperplane.
  - It can capture linearly separable classes.
  - For classes that are not linearly separable, use a network of perceptrons.
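
XOR is the standard example of classes that no single hyperplane separates. The sketch below wires three threshold units into a small network that computes it. The weights are hand-picked for illustration (not learned), and the 0/1 output convention is an assumption.

```python
def step(z):
    """Threshold activation with 0/1 outputs."""
    return 1 if z > 0 else 0


def xor_network(x1, x2):
    """Two hidden threshold units feeding one output threshold unit."""
    h_or = step(x1 + x2 - 0.5)    # fires if at least one input is 1
    h_and = step(x1 + x2 - 1.5)   # fires only if both inputs are 1
    return step(h_or - h_and - 0.5)  # OR but not AND


results = {(a, b): xor_network(a, b) for a in (0, 1) for b in (0, 1)}
print(results)  # {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}
```

Each hidden unit still draws one hyperplane; the output unit combines the two half-planes into a region that a single perceptron cannot represent.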

- 27. Multi-layer Networks
  - Linear units are inappropriate: a stack of them is no more expressive than a single layer, so introduce non-linearity.
  - The threshold function is not differentiable, so use the sigmoid function.
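
A minimal sketch of the sigmoid and its derivative follows; the function names are illustrative. It uses the standard identity σ'(z) = σ(z)(1 − σ(z)), which is what makes the sigmoid convenient for gradient-based training.

```python
import math


def sigmoid(z):
    """Smooth, differentiable squashing function with outputs in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_derivative(z):
    """Derivative expressed through the function value: s * (1 - s)."""
    s = sigmoid(z)
    return s * (1.0 - s)


# Compare the closed form with a central finite difference at z = 1.
h = 1e-5
numeric = (sigmoid(1.0 + h) - sigmoid(1.0 - h)) / (2 * h)
print(sigmoid(0.0), sigmoid_derivative(0.0))
print(abs(numeric - sigmoid_derivative(1.0)) < 1e-8)
```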

- 31. Backpropagation
  - Iteratively process a set of training tuples and compare the network's prediction with the actual known target value.
  - For each training tuple, the weights are modified to minimize the mean squared error between the network's prediction and the actual target value.
  - Modifications are made in the "backwards" direction: from the output layer, through each hidden layer, down to the first hidden layer, hence "backpropagation".
  - Steps:
    - Initialize weights (to small random numbers) and biases in the network.
    - Propagate the inputs forward (by applying the activation function).
    - Backpropagate the error (by updating weights and biases).
    - Check the terminating condition (when the error is very small, etc.).
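
The four steps can be sketched for a network with one hidden layer of sigmoid units and a single sigmoid output. Everything specific here is an assumption made for illustration: the function name `train_backprop`, the hidden-layer size, the learning rate, the fixed epoch count used as the terminating condition, and the toy majority-of-three data.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_backprop(data, n_hidden=2, rate=0.5, epochs=2000, seed=0):
    """Online backpropagation; returns weights and per-epoch squared error."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    # Step 1: small random weights; the last weight of each unit is its bias.
    w_hid = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)]
             for _ in range(n_hidden)]
    w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)]
    history = []
    for _ in range(epochs):  # Step 4 (simplified): stop after a fixed budget.
        sse = 0.0
        for x, target in data:
            # Step 2: propagate the inputs forward.
            xb = list(x) + [1.0]
            h = [sigmoid(sum(w * v for w, v in zip(ws, xb))) for ws in w_hid]
            hb = h + [1.0]
            o = sigmoid(sum(w * v for w, v in zip(w_out, hb)))
            err = target - o
            sse += err * err
            # Step 3: backpropagate the error, output layer first.
            delta_o = err * o * (1.0 - o)
            delta_h = [h[j] * (1.0 - h[j]) * w_out[j] * delta_o
                       for j in range(n_hidden)]
            for j in range(n_hidden + 1):
                w_out[j] += rate * delta_o * hb[j]
            for j in range(n_hidden):
                for i in range(n_in + 1):
                    w_hid[j][i] += rate * delta_h[j] * xb[i]
        history.append(sse)
    return w_hid, w_out, history


# Toy data: the target is 1 when at least two of the three inputs are 1.
data = [((a, b, c), 1.0 if a + b + c >= 2 else 0.0)
        for a in (0, 1) for b in (0, 1) for c in (0, 1)]
w_hid, w_out, history = train_backprop(data)
print(round(history[0], 3), round(history[-1], 3))
```

The hidden-layer deltas are computed before the output weights are changed, so each update uses the weights that produced the forward pass.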

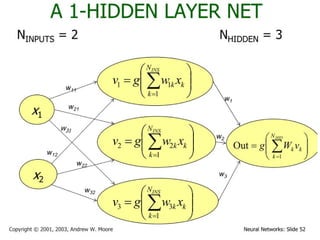

- 33. How a Multi-Layer Neural Network Works
  - The inputs to the network correspond to the attributes measured for each training tuple.
  - Inputs are fed simultaneously into the units making up the input layer.
  - They are then weighted and fed simultaneously to a hidden layer.
  - The number of hidden layers is arbitrary, although usually only one is used.
  - The weighted outputs of the last hidden layer are input to units making up the output layer, which emits the network's prediction.
  - The network is feed-forward in that none of the weights cycles back to an input unit or to an output unit of a previous layer.
  - From a statistical point of view, networks perform nonlinear regression: given enough hidden units and enough training samples, they can closely approximate any function.

- 34. Defining a Network Topology
  - First decide the network topology: the number of units in the input layer, the number of hidden layers (if > 1), the number of units in each hidden layer, and the number of units in the output layer.
  - Normalize the input values for each attribute measured in the training tuples to the range [0.0, 1.0].
  - For discrete-valued attributes, use one input unit per domain value, each initialized to 0.
  - For classification with more than two classes, use one output unit per class.
  - If a trained network's accuracy is unacceptable, repeat the training process with a different network topology or a different set of initial weights.
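
The two input-preparation steps can be sketched as follows. Min-max scaling is assumed as the normalization method (the slide only gives the target range), and the helper names and sample values are illustrative.

```python
def min_max_normalize(values):
    """Rescale a numeric attribute to [0.0, 1.0] (assumed min-max scaling)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant attribute carries no signal
    return [(v - lo) / (hi - lo) for v in values]


def one_hot(value, domain):
    """One input unit per domain value; only the matching unit is set to 1."""
    return [1 if value == d else 0 for d in domain]


ages = [20, 30, 40]
print(min_max_normalize(ages))                    # [0.0, 0.5, 1.0]
print(one_hot("green", ["red", "green", "blue"]))  # [0, 1, 0]
```

The same one-unit-per-value layout applies on the output side when there are more than two classes.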

- 35. Backpropagation and Interpretability
  - Efficiency of backpropagation: each epoch (one iteration through the training set) takes O(|D| * w), with |D| tuples and w weights, but the number of epochs can be exponential in n, the number of inputs, in the worst case.
  - Rule extraction from networks: network pruning
    - Simplify the network structure by removing weighted links that have the least effect on the trained network.
    - Then perform link, unit, or activation value clustering.
    - The sets of input and activation values are studied to derive rules describing the relationship between the input and hidden unit layers.
  - Sensitivity analysis: assess the impact that a given input variable has on a network output. The knowledge gained from this analysis can be represented in rules.
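
One simple way to run a sensitivity analysis is to nudge a single input and measure how much the output moves. The sketch below uses a central finite difference for that. The `toy_model` function is a hypothetical stand-in for a trained network, and the perturbation size is an arbitrary choice.

```python
import math


def sensitivity(model, x, index, delta=1e-4):
    """Approximate d(output)/d(x[index]) with a central finite difference."""
    up = list(x)
    up[index] += delta
    down = list(x)
    down[index] -= delta
    return (model(up) - model(down)) / (2 * delta)


def toy_model(x):
    """Hypothetical stand-in for a trained network with one sigmoid output."""
    return 1.0 / (1.0 + math.exp(-(2.0 * x[0] + 0.1 * x[1])))


point = [0.0, 0.0]
scores = [abs(sensitivity(toy_model, point, i)) for i in range(len(point))]
print([round(s, 4) for s in scores])  # [0.5, 0.025]
```

Here the first input dominates, which could be summarized as a rule such as "the output depends mainly on x0 near this point".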

- 36. Neural Network as a Classifier
  - Weaknesses
    - Long training time.
    - Requires a number of parameters that are typically best determined empirically, e.g., the network topology or "structure."
    - Poor interpretability: it is difficult to interpret the symbolic meaning behind the learned weights and the "hidden units" in the network.
  - Strengths
    - High tolerance to noisy data.
    - Ability to classify untrained patterns.
    - Well suited for continuous-valued inputs and outputs.
    - Successful on a wide array of real-world data.
    - Algorithms are inherently parallel.
    - Techniques have recently been developed for the extraction of rules from trained neural networks.

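- 38. Artificial Neural Networks (ANN)

  | X1 | X2 | X3 | Y |
  |----|----|----|---|
  | 1  | 0  | 0  | 0 |
  | 1  | 0  | 1  | 1 |
  | 1  | 1  | 0  | 1 |
  | 1  | 1  | 1  | 1 |
  | 0  | 0  | 1  | 0 |
  | 0  | 1  | 0  | 0 |
  | 0  | 1  | 1  | 1 |
  | 0  | 0  | 0  | 0 |

  - Figure: input nodes X1, X2, X3 connect to the output node Y through a black box, each with weight 0.3, and the threshold is t = 0.4.
  - $Y = I(0.3 X_1 + 0.3 X_2 + 0.3 X_3 - 0.4 > 0)$, where $I(z) = 1$ if $z$ is true and $0$ otherwise.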
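
The worked example can be checked mechanically: with all three weights at 0.3 and threshold 0.4, the unit should reproduce every row of the truth table. The names `indicator` and `black_box` below are illustrative.

```python
# Rows of the slide's truth table as ((X1, X2, X3), Y).
TRUTH_TABLE = [
    ((1, 0, 0), 0), ((1, 0, 1), 1), ((1, 1, 0), 1), ((1, 1, 1), 1),
    ((0, 0, 1), 0), ((0, 1, 0), 0), ((0, 1, 1), 1), ((0, 0, 0), 0),
]


def indicator(condition):
    """I(z): 1 if z is true, 0 otherwise."""
    return 1 if condition else 0


def black_box(x1, x2, x3):
    """Y = I(0.3*X1 + 0.3*X2 + 0.3*X3 - 0.4 > 0)."""
    return indicator(0.3 * x1 + 0.3 * x2 + 0.3 * x3 - 0.4 > 0)


mismatches = [(x, y) for x, y in TRUTH_TABLE if black_box(*x) != y]
print(mismatches)  # []
```

The unit fires exactly when at least two inputs are 1, since one input contributes 0.3 (below 0.4) and two contribute 0.6 (above it).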

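- 40. A Multi-Layer Feed-Forward Neural Network
  - Figure: an input vector X enters the input layer, passes through a hidden layer over links with weights wij, and leaves the output layer as the output vector.
  - Weight update: $w_j^{(k+1)} = w_j^{(k)} + \lambda \left( y_i - \hat{y}_i^{(k)} \right) x_{ij}$, where $\lambda$ is the learning rate.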
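
An error-driven weight update of the form "new weight = old weight + rate × (target − prediction) × input" can be sketched for a single threshold unit. The data reuses the three-input truth table from slide 38; the function names, the zero initialization, the learning rate of 1.0, and the epoch cap are assumptions for the demo.

```python
def predict(w, x):
    """Threshold unit with 0/1 output; w[0] pairs with a constant input of 1."""
    z = w[0] + sum(wj * xj for wj, xj in zip(w[1:], x))
    return 1 if z > 0 else 0


def train_perceptron(data, rate=1.0, epochs=200):
    """Apply w_j <- w_j + rate * (y - y_hat) * x_j after each example."""
    w = [0.0] * (len(data[0][0]) + 1)
    for _ in range(epochs):
        mistakes = 0
        for x, y in data:
            error = y - predict(w, x)
            if error != 0:
                mistakes += 1
                w[0] += rate * error              # bias input is 1
                for j, xj in enumerate(x, start=1):
                    w[j] += rate * error * xj
        if mistakes == 0:  # every training example is classified correctly
            break
    return w


# Truth table from slide 38: Y = 1 when at least two inputs are 1.
DATA = [
    ((1, 0, 0), 0), ((1, 0, 1), 1), ((1, 1, 0), 1), ((1, 1, 1), 1),
    ((0, 0, 1), 0), ((0, 1, 0), 0), ((0, 1, 1), 1), ((0, 0, 0), 0),
]
weights = train_perceptron(DATA)
print(weights, all(predict(weights, x) == y for x, y in DATA))
```

Because this data is linearly separable, the loop stops making updates after a finite number of mistakes; on non-separable data it would simply run until the epoch cap.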

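- 41. General Structure of ANN
  - Figure: neuron i receives inputs I1, I2, I3 over weights wi1, wi2, wi3, forms the sum Si, and applies the activation function g(Si) with threshold t to produce output Oi. A second panel shows inputs x1 to x5 passing through the input layer, hidden layer, and output layer to produce y.
  - Training an ANN means learning the weights of the neurons.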
