Deep Learning and TensorFlow

- 1. Introduction to Deep Learning BayPiggies Meetup, 08/23/2018, LinkedIn Sunnyvale, Unify Meeting Room. Oswald Campesato

- 2. Highlights/Overview intro to AI/ML/DL/NNs Hidden layers Initialization values Neurons per layer Activation function cost function gradient descent learning rate Dropout rate what are CNNs

- 4. Use Cases for Deep Learning computer vision speech recognition image processing bioinformatics social network filtering drug design Recommendation systems Mobile Advertising Many others

- 5. NN 3 Hidden Layers: Classifier

- 6. NN: 2 Hidden Layers (Regression)

- 7. Classification and Deep Learning

- 8. A Basic Model in Machine Learning Let’s perform the following steps: 1) Start with a simple model (2 variables) 2) Generalize that model (n variables) 3) See how it might apply to a NN

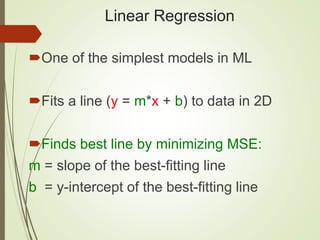

- 9. Linear Regression One of the simplest models in ML Fits a line (y = m*x + b) to data in 2D Finds best line by minimizing MSE: m = slope of the best-fitting line b = y-intercept of the best-fitting line
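
As a rough illustration of fitting y = m*x + b by minimizing MSE, the sketch below uses the closed-form least-squares solution for a single feature. The sample points and the names `fit_line` and `mse` are made up for this example and do not come from the slides.

```python
def fit_line(xs, ys):
    """Return (m, b) that minimize the mean squared error of y = m*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the intercept follows from the means.
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    m = cov_xy / var_x
    b = mean_y - m * mean_x
    return m, b


def mse(xs, ys, m, b):
    """Mean squared error of the line y = m*x + b on the given points."""
    return sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Made-up points scattered around y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.0]

m, b = fit_line(xs, ys)
print("slope:", m, "intercept:", b)
print("MSE of best fit:", mse(xs, ys, m, b))
print("MSE of y = 2x + 1:", mse(xs, ys, 2.0, 1.0))
```

The fitted line should never have a higher MSE than any other line on the same points, which is what the last two prints compare.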

- 10. Linear Regression in 2D: example

- 11. Linear Regression in 2D: example

- 12. Sample Cost Function #1 (MSE)

- 13. Linear Regression: example #1 One feature (independent variable): X = number of square feet Predicted value (dependent variable): Y = cost of a house A very “coarse grained” model We can devise a much better model

- 14. Linear Regression: example #2 Multiple features: X1 = # of square feet X2 = # of bedrooms X3 = # of bathrooms (dependency?) X4 = age of house X5 = cost of nearby houses X6 = corner lot (or not): Boolean a much better model (6 features)

- 15. Linear Multivariate Analysis General form of multivariate equation: Y = w1*x1 + w2*x2 + . . . + wn*xn + b w1, w2, . . . , wn are numeric values x1, x2, . . . , xn are variables (features) Properties of variables: Can be independent (Naïve Bayes) weak/strong dependencies can exist
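
The general form Y = w1*x1 + ... + wn*xn + b is a weighted sum plus a bias. The sketch below evaluates it for one house described by the six features listed earlier. Every weight, the bias, and the feature values are invented for illustration and are not fitted to any data.

```python
def predict(weights, features, bias):
    """Evaluate Y = w1*x1 + w2*x2 + ... + wn*xn + b."""
    return sum(w * x for w, x in zip(weights, features)) + bias


# Hypothetical house: square feet, bedrooms, bathrooms, age (years),
# cost of nearby houses, corner lot (1 = yes, 0 = no).
features = [1500, 3, 2, 20, 300000, 1]

# Hypothetical weights, one per feature, plus a bias term.
weights = [100.0, 5000.0, 3000.0, -500.0, 0.5, 10000.0]
bias = 20000.0

price = predict(weights, features, bias)
print("predicted price:", price)
```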

- 16. NN with 3 Hidden Layers (again)

- 17. Neural Networks: equations Node “values” in first hidden layer: N1 = w11*x1+w21*x2+…+wn1*xn N2 = w12*x1+w22*x2+…+wn2*xn N3 = w13*x1+w23*x2+…+wn3*xn . . . Nn = w1n*x1+w2n*x2+…+wnn*xn Similar equations for other pairs of layers

- 18. Neural Networks: Matrices From inputs to first hidden layer: Y1 = W1*X + B1 (X/Y1/B1: vectors; W1: matrix) From first to second hidden layers: Y2 = W2*Y1 + B2 (Y1/Y2/B2: vectors; W2: matrix) From second to third hidden layers: Y3 = W3*Y2 + B3 (Y2/Y3/B3: vectors; W3: matrix) Apply an “activation function” to y values
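
A minimal sketch of this layer-by-layer computation, in which each layer takes the previous layer's output as its input and applies an activation function to the result. The layer sizes, weights, and biases are arbitrary values chosen for the example, and ReLU is an assumed choice of activation.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(z):
    return max(0.0, z)


def dense(W, x, B, activation):
    """One layer: activation(W*x + B), applied element by element."""
    return [activation(z + b) for z, b in zip(matvec(W, x), B)]


# Hypothetical network: 2 inputs -> 3 nodes -> 2 nodes -> 2 nodes.
X = [1.0, 2.0]
W1 = [[0.5, 0.5], [1.0, 1.0], [-1.0, 0.5]]
B1 = [0.0, 0.5, 0.0]
W2 = [[1.0, 0.0, 1.0], [0.5, 0.5, 0.5]]
B2 = [0.0, -1.0]
W3 = [[1.0, -1.0], [0.0, 2.0]]
B3 = [0.1, 0.0]

Y1 = dense(W1, X, B1, relu)   # inputs -> first hidden layer
Y2 = dense(W2, Y1, B2, relu)  # first -> second hidden layer
Y3 = dense(W3, Y2, B3, relu)  # second -> third hidden layer
print(Y1, Y2, Y3)
```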

- 19. Neural Networks (general) Multiple hidden layers: Layer composition is your decision Activation functions: sigmoid, tanh, ReLU https://en.wikipedia.org/wiki/Activation_function Back propagation (1980s) https://en.wikipedia.org/wiki/Backpropagation => Initial weights: small random numbers

- 20. Euler’s Function (e: 2.71828. . .)

- 21. The sigmoid Activation Function

- 22. The tanh Activation Function

- 23. The ReLU Activation Function

- 24. The softmax Activation Function

- 25. Activation Functions in Python import numpy as np ... # Python sigmoid example: z = 1/(1 + np.exp(-np.dot(W, x))) ... # Python tanh example: z = np.tanh(np.dot(W, x)) # Python ReLU example: z = np.maximum(0, np.dot(W, x))
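
For readers without NumPy at hand, here is a scalar sketch of the same activation functions using only the standard library, plus softmax, which the slide does not show in code. The max-shift inside `softmax` is a common numerical-stability trick added here as an assumption, not something taken from the slides.

```python
import math


def sigmoid(z):
    """Squash z into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Pass positive values through, clamp negatives to zero."""
    return max(0.0, z)


def softmax(zs):
    """Turn a list of scores into probabilities that sum to 1."""
    shift = max(zs)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(z - shift) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


zs = [-2.0, 0.0, 3.0]
print("sigmoid:", [sigmoid(z) for z in zs])
print("tanh:   ", [math.tanh(z) for z in zs])
print("relu:   ", [relu(z) for z in zs])
print("softmax:", softmax(zs))
```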

- 26. What’s the “Best” Activation Function? Initially: sigmoid was popular Then: tanh became popular Now: RELU is preferred (better results) Softmax: for FC (fully connected) layers NB: sigmoid and tanh are used in LSTMs

- 27. Types of Cost/Error Functions MSE (mean-squared error) Cross-entropy exponential others

- 28. Sample Cost Function #1 (MSE)

- 29. Sample Cost Function #2

- 30. Sample Cost Function #3

- 31. How to Select a Cost Function mean-squared error: for a regression problem binary cross-entropy (or mse): for a two-class classification problem categorical cross-entropy: for a many-class classification problem
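
The three cost functions named here can be sketched in a few lines of plain Python. The function names and the `eps` clamp (which guards against log(0)) are choices made for this example.

```python
import math


def mean_squared_error(y_true, y_pred):
    """Regression: average squared difference."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Two classes: y_true holds 0/1 labels, p_pred holds P(label == 1)."""
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() away from 0
        total -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    return total / len(y_true)


def categorical_cross_entropy(one_hot_true, probs_pred, eps=1e-12):
    """Many classes: one-hot labels against predicted class probabilities."""
    total = 0.0
    for true_row, prob_row in zip(one_hot_true, probs_pred):
        total -= sum(t * math.log(max(p, eps)) for t, p in zip(true_row, prob_row))
    return total / len(one_hot_true)


print(mean_squared_error([1.0, 2.0], [1.0, 4.0]))
print(binary_cross_entropy([1, 0], [0.9, 0.2]))
print(categorical_cross_entropy([[0, 1, 0]], [[0.1, 0.8, 0.1]]))
```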

- 33. GD versus SGD SGD (Stochastic Gradient Descent): + one row of data Minibatch: involves a SUBSET of the dataset + aka Minibatch Stochastic Gradient Descent GD (Gradient Descent): + involves the ENTIRE dataset More details: http://cs229.stanford.edu/notes/cs229-notes1.pdf
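
In this sketch the only difference between the three variants is how many rows feed each weight update. It trains y = m*x + b on noise-free synthetic data, so all three should approach m = 2 and b = 1. The learning rate, epoch count, and dataset are assumptions picked so that this toy problem converges. They are not general recommendations.

```python
import random


def gradient(batch, m, b):
    """Gradient of the MSE of y = m*x + b over the rows in `batch`."""
    n = len(batch)
    grad_m = sum(2.0 * (m * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2.0 * (m * x + b - y) for x, y in batch) / n
    return grad_m, grad_b


def train(data, batch_size, epochs=500, learning_rate=0.5, seed=0):
    """batch_size = len(data): GD; 1: SGD; anything in between: minibatch."""
    rng = random.Random(seed)
    rows = list(data)
    m, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(rows)
        for start in range(0, len(rows), batch_size):
            batch = rows[start:start + batch_size]
            grad_m, grad_b = gradient(batch, m, b)
            m -= learning_rate * grad_m
            b -= learning_rate * grad_b
    return m, b


# Synthetic, noise-free data on the line y = 2x + 1.
data = [(i / 10.0, 2.0 * (i / 10.0) + 1.0) for i in range(10)]

gd = train(data, batch_size=len(data))
sgd = train(data, batch_size=1)
minibatch = train(data, batch_size=4)
print("GD:       ", gd)
print("SGD:      ", sgd)
print("Minibatch:", minibatch)
```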

- 34. Setting up Data & the Model standardize the data: Subtract the ‘mean’ and divide by stddev Initial weight values for NNs: random(0,1) or N(0,1) or N(0, 1/n) More details: http://cs231n.github.io/neural-networks-2/#losses
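
A small sketch of both steps: standardizing one feature column, and drawing initial weights from a zero-mean normal distribution. It assumes the third initialization option means a variance of 1/n, where n is the number of inputs to the layer (a standard deviation of 1/sqrt(n)). The function names and sample values are hypothetical.

```python
import math
import random


def standardize(values):
    """Subtract the mean and divide by the standard deviation."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    stddev = math.sqrt(variance)
    return [(v - mean) / stddev for v in values]


def init_weights(n_inputs, n_outputs, seed=0):
    """Draw an n_outputs x n_inputs weight matrix from N(0, 1/n_inputs)."""
    rng = random.Random(seed)
    stddev = math.sqrt(1.0 / n_inputs)  # assumption: 1/n is the variance
    return [[rng.gauss(0.0, stddev) for _ in range(n_inputs)]
            for _ in range(n_outputs)]


square_feet = [1000.0, 1500.0, 2000.0, 2500.0]  # made-up feature column
scaled = standardize(square_feet)
print("standardized:", scaled)

W = init_weights(n_inputs=4, n_outputs=3)
print("initial weights:", W)
```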

- 35. Deep Neural Network: summary input layer, multiple hidden layers, and output layer nonlinear processing via activation functions perform transformation and feature extraction gradient descent algorithm with back propagation each layer receives the output from previous layer results are comparable/superior to human experts

- 36. Types of Deep Learning Supervised learning (you know the answer) unsupervised learning (you don’t know the answer) Semi-supervised learning (mixed dataset) Reinforcement learning (such as games) Types of algorithms: Classifiers (detect images, spam, fraud, etc) Regression (predict stock price, housing price, etc) Clustering (unsupervised classifiers)

- 37. CNNs versus RNNs CNNs (Convolutional NNs): Good for image processing 2000: CNNs processed 10-20% of all checks => Approximately 60% of all NNs RNNs (Recurrent NNs): Good for NLP and audio Used in hybrid networks

- 38. CNNs: Convolution, ReLU, and Max Pooling

- 40. CNNs: Convolution Matrices (examples) Sharpen: Blur:

- 41. CNNs: Convolution Matrices (examples) Edge detect: Emboss:

- 42. CNNs: Max Pooling Example
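
The convolution, ReLU, and max pooling steps can be sketched on a tiny grayscale image. The kernel values on the original slides were images and did not survive extraction. The sharpen kernel below is a commonly used one and is an assumption, not a recovery of the slide content. As in most CNN libraries, the "convolution" here slides the kernel without flipping it.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu_map(feature_map):
    """Apply ReLU to every value in a 2D feature map."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [[max(feature_map[i + di][j + dj]
                 for di in range(size) for dj in range(size))
             for j in range(0, len(feature_map[0]) - size + 1, size)]
            for i in range(0, len(feature_map) - size + 1, size)]


# Commonly used sharpen kernel (assumed; its entries sum to 1).
SHARPEN = [[0, -1, 0],
           [-1, 5, -1],
           [0, -1, 0]]

# Made-up 6x6 image with small integer pixel values.
image = [[(i * 6 + j) % 5 for j in range(6)] for i in range(6)]

features = relu_map(convolve2d(image, SHARPEN))  # 6x6 -> 4x4
pooled = max_pool(features)                      # 4x4 -> 2x2
print(features)
print(pooled)
```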

- 43. GANs: Generative Adversarial Networks

- 44. GANs: Generative Adversarial Networks Make imperceptible changes to images Can consistently defeat all NNs Can have extremely high error rate Some images create optical illusions https://www.quora.com/What-are-the-pros-and-cons-of-using-generative-adversarial-networks-a-type-of-neural-network

- 45. GANs: Generative Adversarial Networks Create your own GANs: https://www.oreilly.com/learning/generative-adversarial-networks-for-beginners https://github.com/jonbruner/generative-adversarial-networks GANs from MNIST: http://edwardlib.org/tutorials/gan GANs and Capsule networks?

- 46. CNN in Python/Keras (fragment) from keras.models import Sequential from keras.layers.core import Dense, Dropout, Activation from keras.layers.convolutional import Conv2D, MaxPooling2D from keras.optimizers import Adadelta input_shape = (3, 32, 32) nb_classes = 10 model = Sequential() model.add(Conv2D(32, (3, 3), padding='same', input_shape=input_shape)) model.add(Activation('relu')) model.add(Conv2D(32, (3, 3))) model.add(Activation('relu')) model.add(MaxPooling2D(pool_size=(2, 2))) model.add(Dropout(0.25))
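
To follow how the tensor shape changes through this fragment, the sketch below applies the usual output-size formulas layer by layer. It assumes the input shape (3, 32, 32) means channels-first ordering (channels, height, width), which depends on the Keras image data format setting. With channels-last ordering the same tuple would be read as a 3x32 image with 32 channels. The helper names are hypothetical. Activation and Dropout layers leave the shape unchanged, so they are omitted.

```python
def conv_output_size(size, kernel, padding, stride=1):
    """Output length of a convolution along one spatial axis."""
    if padding == "same":
        return (size + stride - 1) // stride  # ceil(size / stride)
    if padding == "valid":
        return (size - kernel) // stride + 1
    raise ValueError("padding must be 'same' or 'valid'")


def pool_output_size(size, pool):
    """Output length of max pooling whose stride equals the pool size."""
    return (size - pool) // pool + 1


shape = (3, 32, 32)  # assumed (channels, height, width)
layers = [
    ("conv", 32, 3, "same"),   # Conv2D(32, (3, 3), padding='same')
    ("conv", 32, 3, "valid"),  # Conv2D(32, (3, 3)); Keras defaults to 'valid'
    ("pool", 2),               # MaxPooling2D(pool_size=(2, 2))
]

for layer in layers:
    channels, height, width = shape
    if layer[0] == "conv":
        _, filters, kernel, padding = layer
        shape = (filters,
                 conv_output_size(height, kernel, padding),
                 conv_output_size(width, kernel, padding))
    else:
        pool = layer[1]
        shape = (channels,
                 pool_output_size(height, pool),
                 pool_output_size(width, pool))
    print(layer[0], "->", shape)
```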

- 47. What is TensorFlow? An open source framework for ML and DL A “computation” graph Created by Google (released 11/2015) Evolved from Google Brain Linux and Mac OS X support (VM for Windows) TF home page: https://www.tensorflow.org/

- 48. What is TensorFlow? Support for Python, Java, C++ Desktop, server, mobile device (TensorFlow Lite) CPU/GPU/TPU support Visualization via TensorBoard Can be embedded in Python scripts Installation: pip install tensorflow TensorFlow cluster: https://www.tensorflow.org/deploy/distributed

- 49. TensorFlow Use Cases (Generic) Image recognition Computer vision Voice/sound recognition Time series analysis Language detection Language translation Text-based processing Handwriting Recognition

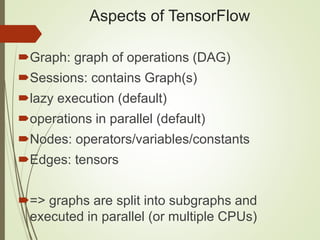

- 50. Aspects of TensorFlow Graph: graph of operations (DAG) Sessions: contains Graph(s) lazy execution (default) operations in parallel (default) Nodes: operators/variables/constants Edges: tensors => graphs are split into subgraphs and executed in parallel (or multiple CPUs)
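
The split between building a graph and executing it can be illustrated with a toy graph evaluator. This is not the TensorFlow API: `Node`, `constant`, `placeholder`, `add`, `multiply`, and `run` are hypothetical names written for this sketch, and the evaluator handles plain Python numbers only. It shows lazy execution: building `c` computes nothing until `run` is called with values for the placeholders.

```python
class Node:
    """A node in a toy computation graph; the inputs are its incoming edges."""

    def __init__(self, op, inputs=(), value=None, name=None):
        self.op = op
        self.inputs = inputs
        self.value = value
        self.name = name


def constant(value):
    return Node("const", value=value)


def placeholder(name):
    return Node("placeholder", name=name)


def add(a, b):
    return Node("add", inputs=(a, b))


def multiply(a, b):
    return Node("mul", inputs=(a, b))


def run(node, feed_dict=None):
    """Evaluate a node by recursively evaluating the nodes it depends on."""
    feed_dict = feed_dict or {}
    if node.op == "const":
        return node.value
    if node.op == "placeholder":
        return feed_dict[node]  # the value is supplied only at run time
    left, right = (run(n, feed_dict) for n in node.inputs)
    return left + right if node.op == "add" else left * right


# Building the graph computes nothing yet.
a = placeholder("a")
b = placeholder("b")
c = multiply(a, b)
y = add(c, constant(10))

# Values flow through the graph only when run() is called.
print(run(c, {a: 2, b: 3}))
print(run(y, {a: 2, b: 3}))
```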

- 51. TensorFlow Graph Execution Execute statements in a tf.Session() object Invoke the “run” method of that object “eager” execution is available (>= v1.4) included in the mainline (v1.7) Installation: pip install tensorflow

- 52. What is a Tensor? TF tensors are n-dimensional arrays TF tensors are very similar to numpy ndarrays scalar number: a zeroth-order tensor vector: a first-order tensor matrix: a second-order tensor 3-dimensional array: a 3rd order tensor https://dzone.com/articles/tensorflow-simplified-examples
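
The order (rank) of a tensor is the number of indices needed to reach a single number. The sketch below computes rank and shape for nested Python lists. It assumes non-empty, rectangular nesting, and the helper names are made up for this example.

```python
def rank(tensor):
    """Number of nesting levels: 0 for a scalar, 1 for a vector, and so on."""
    levels = 0
    while isinstance(tensor, list):
        levels += 1
        tensor = tensor[0]  # assumes non-empty, rectangular nesting
    return levels


def shape(tensor):
    """Length along each axis, outermost first."""
    dims = []
    while isinstance(tensor, list):
        dims.append(len(tensor))
        tensor = tensor[0]
    return tuple(dims)


scalar = 3.0
vector = [1.0, 2.0, 3.0]
matrix = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
cube = [[[0.0, 1.0], [2.0, 3.0]], [[4.0, 5.0], [6.0, 7.0]]]

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("3D array", cube)]:
    print(name, "rank:", rank(t), "shape:", shape(t))
```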

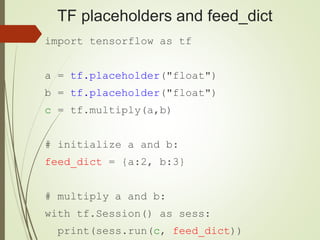

- 53. TensorFlow “primitive types” tf.constant: + initialized immediately + immutable tf.placeholder (a function): + initial value is not required + can have variable shape + assigned value via feed_dict at run time + receive data from “external” sources

- 54. TensorFlow “primitive types” tf.Variable (a class): + initial value is required + updated during training + maintain state across calls to “run()” + in-memory buffer (saved/restored from disk) + can be shared in a distributed environment + they hold learned parameters of a model

- 55. TensorFlow: constants (immutable) import tensorflow as tf aconst = tf.constant(3.0) print(aconst) # output: Tensor("Const:0", shape=(), dtype=float32) sess = tf.Session() print(sess.run(aconst)) # output: 3.0 sess.close() # => there's a better way

- 56. TensorFlow: constants import tensorflow as tf aconst = tf.constant(3.0) print(aconst) # Automatically close “sess”: with tf.Session() as sess: print(sess.run(aconst))

- 57. TensorFlow Arithmetic import tensorflow as tf a = tf.add(4, 2) b = tf.subtract(8, 6) c = tf.multiply(a, 3) d = tf.div(a, 6) with tf.Session() as sess: print(sess.run(a)) # 6 print(sess.run(b)) # 2 print(sess.run(c)) # 18 print(sess.run(d)) # 1

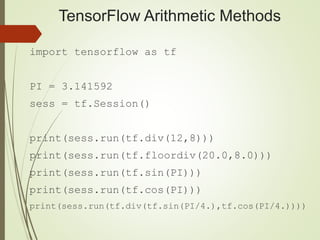

- 58. TensorFlow Arithmetic Methods import tensorflow as tf PI = 3.141592 sess = tf.Session() print(sess.run(tf.div(12,8))) print(sess.run(tf.floordiv(20.0,8.0))) print(sess.run(tf.sin(PI))) print(sess.run(tf.cos(PI))) print(sess.run(tf.div(tf.sin(PI/4.),tf.cos(PI/4.))))

- 59. TensorFlow Arithmetic Methods Output from previous slide: 1 2.0 6.27833e-07 -1.0 1.0
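
Two of these outputs can look surprising. The sketch below reproduces them in plain Python under two assumptions: that dividing two integers with `tf.div` performs integer division, and that Python floats become 32-bit floats by default in TensorFlow. The helper `to_float32` is written for this example and rounds through the `struct` module.

```python
import math
import struct


def to_float32(value):
    """Round a Python float (64-bit) to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", value))[0]


PI = 3.141592
pi32 = to_float32(PI)

# Integer operands: division truncates, so 12 / 8 gives 1 rather than 1.5.
print(12 // 8)

# Float operands with floor division.
print(20.0 // 8.0)

# PI is slightly less than pi, so sin(PI) is a tiny positive number,
# roughly equal to the gap between pi and the 32-bit value of PI.
print(math.sin(pi32))
print(math.pi - pi32)

# sin/cos at PI/4 is tan(PI/4), which is very close to 1.
quarter = to_float32(PI / 4.0)
print(math.sin(quarter) / math.cos(quarter))
```

The value printed for `math.sin(pi32)` should agree with the 6.27833e-07 shown in the output to the digits displayed, which suggests the nonzero result comes from the truncated value of PI stored at 32-bit precision and not from an error in `tf.sin`.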

- 60. TF placeholders and feed_dict import tensorflow as tf a = tf.placeholder("float") b = tf.placeholder("float") c = tf.multiply(a,b) # initialize a and b: feed_dict = {a:2, b:3} # multiply a and b: with tf.Session() as sess: print(sess.run(c, feed_dict))

- 61. TensorFlow: Simple Equation import tensorflow as tf # W and x are 1d arrays W = tf.constant([10,20], name='W') x = tf.placeholder(tf.int32, name='x') b = tf.placeholder(tf.int32, name='b') Wx = tf.multiply(W, x, name='Wx') y = tf.add(Wx, b, name='y') # OR: y2 = tf.add(tf.multiply(W,x),b)

- 62. TensorFlow fetch/feed_dict with tf.Session() as sess: print("Result 1: Wx = ", sess.run(Wx, feed_dict={x:[5,10]})) print("Result 2: y = ", sess.run(y,feed_dict={x:[5,10],b:[15,25]})) Result 1: Wx = [50 200] Result 2: y = [65 225]

- 63. TensorFlow: Linear Regression import tensorflow as tf import numpy rng = numpy.random # tf Graph Input X = tf.placeholder("float") Y = tf.placeholder("float") # Set model weights W = tf.Variable(rng.randn(), name="weight") b = tf.Variable(rng.randn(), name="bias") # Construct a linear model (pred = predicted value): pred = tf.add(tf.multiply(X, W), b)

- 64. Saving Graphs for TensorBoard import tensorflow as tf x = tf.constant(5,name="x") y = tf.constant(8,name="y") z = tf.Variable(2*x+3*y, name="z") init = tf.global_variables_initializer() with tf.Session() as session: writer = tf.summary.FileWriter("./tf_logs",session.graph) session.run(init) print('z = ', session.run(z)) # => z = 34 # launch: tensorboard --logdir=./tf_logs

- 65. TensorFlow Eager Execution An imperative interface to TF Fast debugging & immediate run-time errors Eager execution is “mainline” in v1.7 of TF => requires Python 3.x (not Python 2.x)

- 66. TensorFlow Eager Execution integration with Python tools Supports dynamic models + Python control flow support for custom and higher-order gradients Supports most TensorFlow operations https://research.googleblog.com/2017/10/eager-execution-imperative-define-by.html

- 67. TensorFlow Eager Execution import tensorflow as tf import tensorflow.contrib.eager as tfe tfe.enable_eager_execution() x = [[2.]] m = tf.matmul(x, x) print(m) # tf.Tensor([[4.]], shape=(1, 1), dtype=float32)

- 68. Deep Learning and Art/“Stuff” “Convolutional Blending” images: => 19-layer Convolutional Neural Network www.deepart.io https://www.fastcodesign.com/90124942/this-google-engineer-taught-an-algorithm-to-make-train-footage-and-its-hypnotic

- 69. Some of my Books 1) HTML5 Canvas and CSS3 Graphics (2013) 2) jQuery, CSS3, and HTML5 for Mobile (2013) 3) HTML5 Pocket Primer (2013) 4) jQuery Pocket Primer (2013) 5) HTML5 Mobile Pocket Primer (2014) 6) D3 Pocket Primer (2015) 7) Python Pocket Primer (2015) 8) SVG Pocket Primer (2016) 9) CSS3 Pocket Primer (2016) 10) Android Pocket Primer (2017) 11) Angular Pocket Primer (2017) 12) Data Cleaning Pocket Primer (2018) 13) RegEx Pocket Primer (2018)

- 70. What I do (Training) => Instructor at UCSC: Deep Learning with TensorFlow (10/2018 & 02/2019) Machine Learning Introduction (01/17/2019) => Mobile and TensorFlow Lite (WIP) => R and Deep Learning (WIP) => Android for Beginners (multi-day workshops)
