Lambda Architecture with Spark, Spark Streaming, Kafka, Cassandra, Akka and Scala

- 1. Lambda Architecture with Spark Streaming, Kafka, Cassandra, Akka, Scala. Helena Edelson (@helenaedelson). ©2014 DataStax Confidential. Do not distribute without consent.

- 2. Who Is This Person? • Spark Cassandra Connector committer • Akka contributor (Akka Cluster) • Scala & Big Data conference speaker • Sr Software Engineer, Analytics @ DataStax • Sr Cloud Engineer, VMware, CrowdStrike, SpringSource… • (Prev) Spring committer - Spring AMQP, Spring Integration

- 3. Use Case: Hadoop + Scalding /** Reads SequenceFile data from S3 buckets, computes then persists to Cassandra. */ class TopSearches(args: Args) extends TopKDailyJob[MyDataType](args) with Cassandra { PailSource.source[Search](rootpath, structure, directories).read .mapTo('pailItem -> 'engines) { e: Search ⇒ results(e) } .filter('engines) { e: String ⇒ e.nonEmpty } .groupBy('engines) { _.size('count).sortBy('engines) } .groupBy('engines) { _.sortedReverseTake[(String, Long)](('engines, 'count) -> 'tcount, k) } .flatMapTo('tcount -> ('key, 'engine, 'topCount)) { t: List[(String, Long)] ⇒ t map { case (k, v) ⇒ (jobKey, k, v) }} .write(CassandraSource(connection, "top_searches", Scheme('key, ('engine, 'topCount)))) }

- 5. Talk Roadmap • What: Delivering Meaning • Why: Spark, Kafka, Cassandra & Akka • How: Composable Pipelines • App: Robust Implementation

- 6. Sending Data Between Systems Is Difficult, Risky

- 8. Strategies • Scalable Infrastructure • Partition For Scale • Replicate For Resiliency • Share Nothing • Asynchronous Message Passing • Parallelism • Isolation • Location Transparency

- 9. Strategy: Technologies • Scalable Infrastructure / Elastic scale on demand: Spark, Cassandra, Kafka • Partition For Scale, Network Topology Aware: Cassandra, Spark, Kafka, Akka Cluster • Replicate For Resiliency (span racks and datacenters, survive regional outages): Spark, Cassandra, Akka Cluster all hash the node ring • Share Nothing, Masterless: Cassandra, Akka Cluster both Dynamo style • Fault Tolerance / No Single Point of Failure: Spark, Cassandra, Kafka • Replay From Any Point Of Failure: Spark, Cassandra, Kafka, Akka + Akka Persistence • Failure Detection: Cassandra, Spark, Akka, Kafka • Consensus & Gossip: Cassandra & Akka Cluster • Parallelism: Spark, Cassandra, Kafka, Akka • Asynchronous Data Passing: Kafka, Akka, Spark • Fast, Low Latency, Data Locality: Cassandra, Spark, Kafka • Location Transparency: Akka, Spark, Cassandra, Kafka

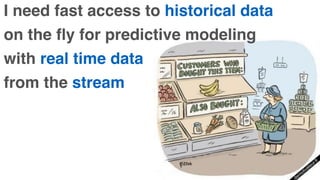

- 10. Lambda Architecture A data-processing architecture designed to handle massive quantities of data by taking advantage of both batch and stream processing methods. • Spark is one of the few data processing frameworks that allows you to seamlessly integrate batch and stream processing • Of petabytes of data • In the same application
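The batch-plus-stream idea on this slide can be sketched in plain Scala, without Spark. This is only an illustrative model: the `PageView` type, the function names and the "count views per URL" query are assumptions made for the example, not part of the talk.

```scala
object LambdaSketch {
  // Hypothetical event type: one page view for a URL at a timestamp (epoch millis).
  final case class PageView(url: String, timestamp: Long)

  // Batch layer: recompute counts from the full, immutable master dataset.
  def batchView(master: Seq[PageView]): Map[String, Long] =
    master.groupBy(_.url).map { case (url, views) => url -> views.size.toLong }

  // Speed layer: count only the events that arrived after the last batch run.
  def realtimeView(recent: Seq[PageView], lastBatchRun: Long): Map[String, Long] =
    batchView(recent.filter(_.timestamp > lastBatchRun))

  // Serving layer: answer a query by merging the batch and real-time views.
  def query(url: String, batch: Map[String, Long], realtime: Map[String, Long]): Long =
    batch.getOrElse(url, 0L) + realtime.getOrElse(url, 0L)
}
```

In a real deployment the two views would typically live in Cassandra tables and be computed by Spark and Spark Streaming; the merge at query time is the part this sketch is meant to show.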

- 11. I need fast access to historical data on the fly for predictive modeling with real time data from the stream

- 12. Apache Spark • Fast, distributed, scalable and fault-tolerant cluster compute system • Enables low-latency, complex analytics • Developed in 2009 at UC Berkeley AMPLab, open sourced in 2010, and became a top-level Apache project in February 2014

- 13. Apache Kafka • High Throughput Distributed Messaging • Decouples Data Pipelines • Handles Massive Data Load • Supports a Massive Number of Consumers • Distribution & partitioning across cluster nodes • Automatic recovery from broker failures

- 15. The one thing in your infrastructure you can always rely on.

- 16. Apache Cassandra • Massively Scalable • High Performance • Always On • Masterless

- 18. • Fault tolerant • Hierarchical Supervision • Customizable Failure Strategies & Detection • Asynchronous Data Passing • Parallelization - Balancing Pool Routers • Akka Cluster • Adaptive / Predictive • Load-Balanced Across Cluster Nodes

- 19. • Stream data from Kafka to Cassandra • Stream data from Kafka to Spark and write to Cassandra • Stream from Cassandra to Spark - coming soon! • Read data from Spark/Spark Streaming Source and write to C* • Read data from Cassandra to Spark

- 20. Your Code

- 22. Most Active OSS In Big Data

- 23. Apache Spark - Easy to Use API • Returns the top (k) highest temps for any location in the year: def topK(aggregate: Seq[Double]): Seq[Double] = sc.parallelize(aggregate).top(k).toSeq • Returns the top (k) highest temps … in a Future (needs an implicit ExecutionContext in scope): def topK(aggregate: Seq[Double]): Future[Seq[Double]] = Future(sc.parallelize(aggregate).top(k).toSeq)
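To make the two `topK` variants concrete, here is a minimal sketch on ordinary Scala collections. It only models the semantics (largest `k` values first, optionally wrapped in a `Future`); the object and method names are assumptions for illustration, and no Spark cluster is involved.

```scala
import scala.concurrent.{ExecutionContext, Future}

object TopKSketch {
  // Returns the k largest values, highest first.
  def topK(aggregate: Seq[Double], k: Int): Seq[Double] =
    aggregate.sortWith(_ > _).take(k)

  // Same computation, run asynchronously on the supplied execution context.
  def topKAsync(aggregate: Seq[Double], k: Int)(implicit ec: ExecutionContext): Future[Seq[Double]] =
    Future(topK(aggregate, k))
}
```

The asynchronous form is useful when the caller is an actor or another non-blocking component that should not wait for the computation to finish.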

- 24. Not Just MapReduce

- 25. Spark Basic Word Count val conf = new SparkConf() .setMaster(host).setAppName(app) val sc = new SparkContext(conf) sc.textFile(words) .flatMap(_.split("\\s+")) .map(word => (word.toLowerCase, 1)) .reduceByKey(_ + _) .collect
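What the word-count pipeline computes can be checked locally with plain Scala collections. The sketch below mirrors the `flatMap` / `map` / `reduceByKey` steps, using `groupBy` plus a sum in place of `reduceByKey`; `WordCountSketch` is a name chosen for this example, and the extra `filter` is an addition that drops the empty token produced by leading whitespace.

```scala
object WordCountSketch {
  def wordCount(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split("\\s+"))            // split each line on runs of whitespace
      .filter(_.nonEmpty)                  // drop empty tokens from leading whitespace
      .map(word => (word.toLowerCase, 1))  // pair every word with a count of 1
      .groupBy(_._1)                       // local stand-in for reduceByKey
      .map { case (word, pairs) => word -> pairs.map(_._2).sum }
}
```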

- 26. Collection To RDD scala> val data = Array(1, 2, 3, 4, 5) data: Array[Int] = Array(1, 2, 3, 4, 5) scala> val distributedData = sc.parallelize(data) distributedData: spark.RDD[Int] = spark.ParallelCollection@10d13e3e

- 28. When Batch Is Not Enough

- 29. Spark Streaming • I want results continuously in the event stream • I want to run computations in my event-driven async apps • Exactly once message guarantees

- 30. Spark Streaming Setup val conf = new SparkConf().setMaster(SparkMaster).setAppName(AppName) val ssc = new StreamingContext(conf, Milliseconds(500)) /* Do work in the stream */ ssc.checkpoint(checkpointDir) ssc.start() ssc.awaitTermination()

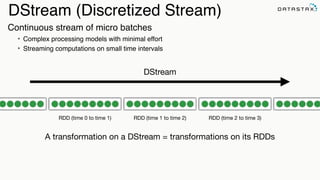

- 31. DStream (Discretized Stream) • Continuous stream of micro batches: RDD (time 0 to time 1), RDD (time 1 to time 2), RDD (time 2 to time 3) • A transformation on a DStream = transformations on its RDDs • Complex processing models with minimal effort • Streaming computations on small time intervals
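The micro-batch idea can be modelled without Spark: events are grouped into fixed time intervals, and a stream transformation is just the same transformation applied to every batch. The types and names below (`Event`, `discretize`, `mapStream`) are assumptions for this sketch, and timestamps are assumed to be non-negative.

```scala
object MicroBatchSketch {
  // Hypothetical event: a value observed at a timestamp in milliseconds.
  final case class Event(timestampMs: Long, value: String)

  // Cuts the event sequence into batches of `batchMs` milliseconds.
  // Each result pair is (batch start time, values that fell into that interval).
  def discretize(events: Seq[Event], batchMs: Long): Seq[(Long, Seq[String])] =
    events
      .groupBy(e => e.timestampMs / batchMs)
      .toSeq
      .sortBy(_._1)
      .map { case (index, es) => (index * batchMs, es.sortBy(_.timestampMs).map(_.value)) }

  // A transformation on the stream is the same transformation on each batch.
  def mapStream[A, B](stream: Seq[(Long, Seq[A])])(f: A => B): Seq[(Long, Seq[B])] =
    stream.map { case (start, batch) => (start, batch.map(f)) }
}
```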

- 32. Spark Streaming External Source/Sink

- 33. ReceiverInputDStreams • DStreams - the stream of raw data received from streaming sources: • Basic Source - in the StreamingContext API • Advanced Source - in external modules and separate Spark artifacts • Receivers: • Reliable Receivers - for data sources supporting acks (like Kafka) • Unreliable Receivers - for data sources not supporting acks

- 34. Basic Streaming: FileInputDStream /* Creates new DStreams */ ssc.textFileStream("s3n://raw_data_bucket/") .flatMap(_.split("\\s+")) .map(_.toLowerCase) .countByValue() .saveAsObjectFiles("s3n://analytics_bucket/")

- 35. Streaming Window Operations kvStream .map { case (k,v) => (k,v.value) } .reduceByKeyAndWindow((a:Int,b:Int) => (a + b), Seconds(30), Seconds(10)) .saveToCassandra(keyspace,table) • Window Length: duration of the window = 30 s • Sliding Interval: interval at which the window operation is performed = every 10 s
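How a window length and a sliding interval interact is easier to see on a small local model. The sketch below assumes a 10 s batch interval, so a 30 s window covers 3 batches and a 10 s slide advances by 1 batch; `WindowSketch` and its parameter names are hypothetical and only approximate the semantics of Spark's `reduceByKeyAndWindow` with addition as the reduce function.

```scala
object WindowSketch {
  // One micro-batch of (key, count) pairs.
  type Batch = Seq[(String, Int)]

  // windowBatches = window length / batch interval
  // slideBatches  = sliding interval / batch interval
  // Emits one reduced result per slide, summing counts per key over the window.
  def reduceByKeyAndWindow(batches: Seq[Batch], windowBatches: Int, slideBatches: Int): Seq[Map[String, Int]] = {
    require(windowBatches > 0 && slideBatches > 0, "window and slide must be positive")
    (slideBatches to batches.length by slideBatches).map { end =>
      val window = batches.slice(math.max(0, end - windowBatches), end)
      window.flatten.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2).sum }
    }
  }
}
```

With a window of 3 batches and a slide of 1, each batch contributes to three consecutive results, which is why windowed aggregates overlap.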

- 38. Apache Cassandra • Elasticity - scale to as many nodes as you need, when you need • Always On - No single point of failure, Continuous availability • Masterless peer to peer architecture • Replication Across DataCenters • Flexible Data Storage • Reads and writes to any node sync across the cluster • Operational simplicity - all nodes in a cluster are the same • Fast Linear-Scale Performance • Transaction Support

- 40. Science Physics: Astro Physics / Particle Physics..

- 41. Genetics / Biological Computations

- 42. IoT

- 43. Cassandra • Availability Model: Amazon Dynamo • Distributed, Masterless • Data Model: Google Bigtable • Network Topology Aware • Multi-Datacenter Replication (diagram labels: Europe, US)

- 44. Analytics with Spark Over Cassandra Online Analytics Cassandra enables Spark nodes to transparently communicate across data centers for data

- 46. What Happens When Nodes Come Back? • Hinted handoff to the rescue • Coordinators keep writes for downed nodes for a configurable amount of time
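The statement on this slide can be illustrated with a toy model: a coordinator keeps a hint for each write aimed at a down node and replays the hints that are still within the configured time window once the node returns. This is a deliberately simplified sketch of the idea, not Cassandra's actual implementation; every name in it is an assumption.

```scala
object HintedHandoffSketch {
  // A write the coordinator could not deliver to `targetNode`.
  final case class Hint(targetNode: String, key: String, value: String, storedAtMs: Long)

  // Coordinator side: remember the write for the downed replica.
  def storeHint(hints: Vector[Hint], hint: Hint): Vector[Hint] = hints :+ hint

  // When `node` comes back at `nowMs`: hints older than `windowMs` are dropped,
  // the rest are split into (hints to replay to `node`, hints kept for other nodes).
  def replay(hints: Vector[Hint], node: String, nowMs: Long, windowMs: Long): (Vector[Hint], Vector[Hint]) = {
    val live = hints.filter(h => nowMs - h.storedAtMs <= windowMs)
    live.partition(_.targetNode == node)
  }
}
```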

- 47. Gossip Did you hear node 1 was down??

- 48. Consensus • Consensus, the agreement among peers on the value of a shared piece of data, is a core building block of Distributed systems • Cassandra supports consensus via the Paxos protocol

- 49. CQL - Easy CREATE TABLE users ( username varchar, firstname varchar, lastname varchar, email list<varchar>, password varchar, created_date timestamp, PRIMARY KEY (username) ); INSERT INTO users (username, firstname, lastname, email, password, created_date) VALUES ('hedelson','Helena','Edelson', ['helena.edelson@datastax.com'],'ba27e03fd95e507daf2937c937d499ab','2014-11-15 13:50:00') IF NOT EXISTS; • Familiar syntax • Many Tools & Drivers • Many Languages • Friendly to programmers • Paxos for locking

- 50. C* Clustering Columns: Timeseries CREATE TABLE weather.raw_data ( wsid text, year int, month int, day int, hour int, temperature double, dewpoint double, pressure double, wind_direction int, wind_speed double, one_hour_precip double, PRIMARY KEY ((wsid), year, month, day, hour) ) WITH CLUSTERING ORDER BY (year DESC, month DESC, day DESC, hour DESC); • Writes by most recent • Reads return most recent first • Cassandra will automatically sort by most recent for both write and read
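The effect of the descending clustering order can be modelled locally: within one partition (one `wsid`), rows are kept sorted by `(year, month, day, hour)` from newest to oldest, so "latest N readings" is simply the head of the partition. The `Reading` case class and function names are assumptions for this sketch; only a subset of the table's columns is modelled.

```scala
object ClusteringOrderSketch {
  // A subset of the weather.raw_data columns.
  final case class Reading(wsid: String, year: Int, month: Int, day: Int, hour: Int, temperature: Double)

  // Mirrors CLUSTERING ORDER BY (year DESC, month DESC, day DESC, hour DESC).
  private val newestFirst: Ordering[Reading] =
    Ordering.by[Reading, (Int, Int, Int, Int)](r => (r.year, r.month, r.day, r.hour)).reverse

  // One partition: all rows for a weather station, already in clustering order.
  def partition(rows: Seq[Reading], wsid: String): Seq[Reading] =
    rows.filter(_.wsid == wsid).sorted(newestFirst)

  // The most recent n readings need no extra sort on the reader's side.
  def latest(rows: Seq[Reading], wsid: String, n: Int): Seq[Reading] =
    partition(rows, wsid).take(n)
}
```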

- 51. val multipleStreams = (1 to numDstreams).map { i => streamingContext.receiverStream[HttpRequest](new HttpReceiver(port)) } streamingContext.union(multipleStreams) .map { httpRequest => TimelineRequestEvent(httpRequest)} .saveToCassandra("requests_ks", "timeline") A record of every event, in the order in which it happened, per URL: CREATE TABLE IF NOT EXISTS requests_ks.timeline ( timesegment bigint, url text, t_uuid timeuuid, method text, headers map <text, text>, body text, PRIMARY KEY ((url, timesegment) , t_uuid) ); • timeuuid protects from simultaneous events over-writing one another. • timesegment protects from writing unbounded partitions.
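One common way to derive a `timesegment` value is to bucket the event time into fixed-size intervals, so that each `(url, timesegment)` partition only ever holds one interval's worth of events. The slide does not say how `timesegment` is computed; the one-hour bucket size and the names below are assumptions for illustration.

```scala
object TimeSegmentSketch {
  // Hypothetical bucket size: one hour, in milliseconds.
  val SegmentMs: Long = 60L * 60L * 1000L

  // Maps an event time (epoch millis, assumed non-negative) to its bucket number.
  def timeSegment(epochMs: Long): Long = epochMs / SegmentMs

  // The composite partition key (url, timesegment) used by the timeline table.
  def partitionKey(url: String, epochMs: Long): (String, Long) =
    (url, timeSegment(epochMs))
}
```

A smaller bucket yields more, smaller partitions; a larger one yields fewer, bigger partitions, so the size is usually chosen from the expected event rate per URL.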

- 52. Spark Cassandra Connector

- 53. Spark Cassandra Connector https://github.com/datastax/spark-cassandra-connector • Write data from Spark to Cassandra • Read data from Cassandra to Spark • Data Locality for Speed • Easy, and often implicit, type conversions • Server-Side Filtering - SELECT, WHERE, etc. • Natural Timeseries Integration • Implemented in Scala

- 54. Spark Cassandra Connector (architecture diagram: User Application, Spark-Cassandra Connector, Spark Executor, C* Driver, Cassandra nodes)

- 55. Co-locate Spark and C* for Best Performance • Running Spark Workers on the same nodes as your C* Cluster saves network hops (diagram: Spark Master, Spark Workers co-located with C* nodes)

- 56. Writing and Reading • SparkContext: import com.datastax.spark.connector._ • StreamingContext: import com.datastax.spark.connector.streaming._

- 57. Write from Spark to Cassandra • sc.parallelize(collection).saveToCassandra("keyspace", "raw_data") (callouts: SparkContext, Keyspace, Table) • Spark RDD JOIN with NOSQL! predictionsRdd.join(music).saveToCassandra("music", "predictions")

- 58. Read From C* to Spark val rdd = sc.cassandraTable("github", "commits") .select("user","count","year","month") .where("commits >= ? and year = ?", 1000, 2015) • Returns CassandraRDD[CassandraRow] • Server-Side Column and Row Filtering (callouts: SparkContext, Keyspace, Table)

- 59. Rows: Custom Objects val rdd = ssc.cassandraTable[MonthlyCommits]("github", "commits_aggregate") .where("user = ? and project_name = ? and year = ?", "helena", "spark-cassandra-connector", 2015) (callouts: StreamingContext, Keyspace, Table, CassandraRow)

- 60. Rows val tuplesRdd = sc.cassandraTable[(Int,Date,String)](db, tweetsTable) .select("cluster_id","time", "cluster_name") .where("time > ? and time < ?", "2014-07-12 20:00:01", "2014-07-12 20:00:03") val keyValuesPairsRdd = sc.cassandraTable[(Key,Value)](keyspace, table)

- 61. Rows val rdd = ssc.cassandraTable[MyDataType]("stats", "clustering_time") rdd.where("key = 1").limit(10).collect rdd.where("key = 1").take(10) val rdd = ssc.cassandraTable[(Int,DateTime,String)]("stats", "clustering_time") .where("key = 1").withAscOrder.collect val rdd = ssc.cassandraTable[(Int,DateTime,String)]("stats", "clustering_time") .where("key = 1").withDescOrder.collect

- 62. Cassandra User Defined Types CREATE TYPE address ( street text, city text, zip_code int, country text, cross_streets set<text> ); UDT = Your Custom Field Type In Cassandra

- 63. Cassandra UDTs With JSON { "productId": 2, "name": "Kitchen Table", "price": 249.99, "description" : "Rectangular table with oak finish", "dimensions": { "units": "inches", "length": 50.0, "width": 66.0, "height": 32 }, "categories": { "Home Furnishings": { "catalogPage": 45, "url": "/home/furnishings" }, "Kitchen Furnishings": { "catalogPage": 108, "url": "/kitchen/furnishings" } } } CREATE TYPE dimensions ( units text, length float, width float, height float ); CREATE TYPE category ( catalogPage int, url text ); CREATE TABLE product ( productId int, name text, price float, description text, dimensions frozen <dimensions>, categories map <text, frozen <category>>, PRIMARY KEY (productId) );

- 64. Composable Pipelines With Spark, Kafka & Cassandra

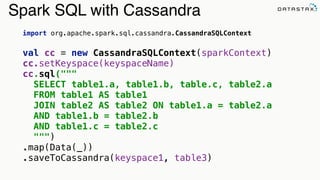

- 65. Spark SQL with Cassandra import org.apache.spark.sql.cassandra.CassandraSQLContext val cc = new CassandraSQLContext(sparkContext) cc.setKeyspace(keyspaceName) cc.sql(""" SELECT table1.a, table1.b, table1.c, table2.a FROM table1 AS table1 JOIN table2 AS table2 ON table1.a = table2.a AND table1.b = table2.b AND table1.c = table2.c """) .map(Data(_)) .saveToCassandra(keyspace1, table3)

- 66. Cross Keyspaces (Databases) val schemaRdd1 = cassandraSqlContext.sql(""" INSERT INTO keyspace2.table3 SELECT table2.a, table2.b, table2.c FROM keyspace1.table2 as table2""") val schemaRdd2 = cassandraSqlContext.sql(""" SELECT table3.a, table3.b, table3.c FROM keyspace2.table3 as table3""") val schemaRdd3 = cassandraSqlContext.sql(""" SELECT test1.a, test1.b, test1.c, test2.a FROM database1.test1 AS test1 JOIN database2.test2 AS test2 ON test1.a = test2.a AND test1.b = test2.b AND test1.c = test2.c""")

- 67. Spark SQL with Cassandra & JSON val sql = new SQLContext(sparkContext) val json = Seq( """{"user":"helena","commits":98, "month":3, "year":2015}""", """{"user":"jacek-lewandowski", "commits":72, "month":3, "year":2015}""", """{"user":"pkolaczk", "commits":42, "month":3, "year":2015}""") /* write */ sql.jsonRDD(sparkContext.parallelize(json)) .map(CommitStats(_)) .flatMap(compute) .saveToCassandra("stats","monthly_commits") /* read */ val rdd = sc.cassandraTable[MonthlyCommits]("stats","monthly_commits") cqlsh> CREATE TABLE github_stats.commits_aggr(user VARCHAR PRIMARY KEY, commits INT…);

- 68. Spark Streaming, Kafka, C* and JSON cqlsh> select * from github_stats.commits_aggr; user | commits | month | year; pkolaczk | 42 | 3 | 2015; jacek-lewandowski | 43 | 3 | 2015; helena | 98 | 3 | 2015 (3 rows) KafkaUtils.createStream[String, String, StringDecoder, StringDecoder]( ssc, kafkaParams, topicMap, StorageLevel.MEMORY_ONLY) .map { case (_,json) => JsonParser.parse(json).extract[MonthlyCommits]} .saveToCassandra("github_stats","commits_aggr")

- 69. Spark Streaming, Kafka & Cassandra sparkConf.set("spark.cassandra.connection.host", "10.20.3.45") val streamingContext = new StreamingContext(sparkConf, Seconds(30)) KafkaUtils.createStream[String, String, StringDecoder, StringDecoder]( streamingContext, kafkaParams, topicMap, StorageLevel.MEMORY_ONLY) .map(_._2) .countByValue() .saveToCassandra("my_keyspace","wordcount")

- 70. Spark Streaming, Twitter & Cassandra /** Cassandra is doing the sorting for you here. */ TwitterUtils.createStream( ssc, auth, tags, StorageLevel.MEMORY_ONLY_SER_2) .flatMap(_.getText.toLowerCase.split("""\s+""")) .filter(tags.contains(_)) .countByValueAndWindow(Seconds(5), Seconds(5)) .transform((rdd, time) => rdd.map { case (term, count) => (term, count, now(time))}) .saveToCassandra(keyspace, table) CREATE TABLE IF NOT EXISTS keyspace.table ( topic text, interval text, mentions counter, PRIMARY KEY(topic, interval) ) WITH CLUSTERING ORDER BY (interval DESC)

- 71. Streaming From Kafka, R/W Cassandra val ssc = new StreamingContext(conf, Seconds(30)) val stream = KafkaUtils.createStream[K, V, KDecoder, VDecoder]( ssc, kafkaParams, topicMap, StorageLevel.MEMORY_ONLY) stream.flatMap { detected => ssc.cassandraTable[AdversaryAttack]("behavior_ks", "observed") .where("adversary = ? and ip = ? and attackType = ?", detected.adversary, detected.originIp, detected.attackType) .collect }.saveToCassandra("profiling_ks", "adversary_profiles")

- 72. Spark Streaming ML, Kafka & Cassandra (pipeline diagram labels: Your Data, Extract Data To Analyze, Training Data, Feature Extraction, Model Training, Model Testing, Test Data; train your model to predict)

- 73. Spark Streaming ML, Kafka & C* val ssc = new StreamingContext(new SparkConf()…, Seconds(5)) val testData = ssc.cassandraTable[String](keyspace,table).map(LabeledPoint.parse) val trainingStream = KafkaUtils.createStream[K, V, KDecoder, VDecoder]( ssc, kafkaParams, topicMap, StorageLevel.MEMORY_ONLY) .map(_._2).map(LabeledPoint.parse) trainingStream.saveToCassandra("ml_keyspace", "raw_training_data") val model = new StreamingLinearRegressionWithSGD() .setInitialWeights(Vectors.dense(weights)) model.trainOn(trainingStream) /* Making predictions on testData */ model .predictOnValues(testData.map(lp => (lp.label, lp.features))) .saveToCassandra("ml_keyspace", "predictions")

- 74. Timeseries Data Application • Global sensors & satellites collect data • Cassandra stores in sequence • Application reads in sequence Apache Cassandra

- 75. Data Analysis Application predictive modelling Apache Cassandra

- 76. Data model should look like your queries

- 77. Design Data Model to support queries • Store raw data per ID • Store time series data in order: most recent to oldest • Compute and store aggregate data in the stream • Set TTLs on historic data. Queries I Need • Get data by ID • Get data for a single date and time • Get data for a window of time • Compute, store and retrieve daily, monthly, annual aggregations

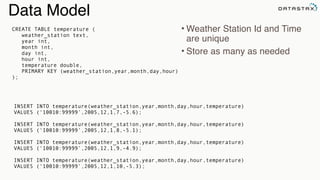

- 78. Data Model • Weather Station Id and Time are unique • Store as many as needed CREATE TABLE temperature ( weather_station text, year int, month int, day int, hour int, temperature double, PRIMARY KEY (weather_station,year,month,day,hour) ); INSERT INTO temperature(weather_station,year,month,day,hour,temperature) VALUES ('10010:99999',2005,12,1,7,-5.6); INSERT INTO temperature(weather_station,year,month,day,hour,temperature) VALUES ('10010:99999',2005,12,1,8,-5.1); INSERT INTO temperature(weather_station,year,month,day,hour,temperature) VALUES ('10010:99999',2005,12,1,9,-4.9); INSERT INTO temperature(weather_station,year,month,day,hour,temperature) VALUES ('10010:99999',2005,12,1,10,-5.3);

- 80. Kafka Producer as Akka Actor class KafkaProducerActor[K, V](config: ProducerConfig) extends Actor { override val supervisorStrategy = OneForOneStrategy(maxNrOfRetries = 10, withinTimeRange = 1.minute) { case _: ActorInitializationException => Stop case _: FailedToSendMessageException => Restart case _: ProducerClosedException => Restart case _: NoBrokersForPartitionException => Escalate case _: KafkaException => Escalate case _: Exception => Escalate } private val producer = new KafkaProducer[K, V](config) override def postStop(): Unit = producer.close() def receive = { case e: KafkaMessageEnvelope[K,V] => producer.send(e) } }

- 81. Akka Actor as REST Endpoint class HttpReceiverActor(kafka: ActorRef) extends Actor with ActorLogging { implicit val materializer = FlowMaterializer() IO(Http) ! Http.Bind(HttpHost, HttpPort) val requestHandler: HttpRequest => HttpResponse = { case HttpRequest(POST, Uri.Path("/weather/v1/hourly-weather"), headers, entity, _) => HttpSource(headers,entity).collect { case s: HeaderSource => for (fs <- s.extract) kafka ! KafkaMessageEnvelope[String, String](topic, key, fs.data:_*) HttpResponse(200, entity = HttpEntity(MediaTypes.`text/html`, s"POST $entity")) } getOrElse HttpResponse(404, entity = s"Unsupported request") } def receive : Actor.Receive = { case Http.ServerBinding(localAddress, stream) => Source(stream).foreach({ case Http.IncomingConnection(remoteAddress, requestProducer, responseConsumer) => log.info("Accepted new connection from {}.", remoteAddress) Source(requestProducer).map(requestHandler).to(Sink(responseConsumer)).run() }) }}

- 82. Akka: Load-Balanced Kafka Work class HttpNodeGuardian extends ClusterAwareNodeGuardianActor { val router = context.actorOf( BalancingPool(PoolSize).props(Props( new KafkaPublisherActor(KafkaHosts, KafkaBatchSendSize)))) Cluster(context.system) registerOnMemberUp { context.actorOf( BalancingPool(PoolSize).props(Props( new HttpReceiverActor(router)))) } def initialized: Actor.Receive = { … } }

- 83. Store raw data on ingestion

- 84. Store Raw Data on Ingestion To Cassandra From Kafka Stream val kafkaStream = KafkaUtils.createStream[K, V, KDecoder, VDecoder] (ssc, kafkaParams, topicMap, StorageLevel.DISK_ONLY_2) .map(transform) .map(RawWeatherData(_)) /** Saves the raw data to Cassandra. */ kafkaStream.saveToCassandra(keyspace, raw_ws_data) /** Now proceed with computations from the same stream.. */ kafkaStream… Now we can replay: on failure, for later computation, etc.

- 85. Our Data Model Again… CREATE TABLE weather.raw_data ( wsid text, year int, month int, day int, hour int, temperature double, dewpoint double, pressure double, wind_direction int, wind_speed double, one_hour_precip double, PRIMARY KEY ((wsid), year, month, day, hour) ) WITH CLUSTERING ORDER BY (year DESC, month DESC, day DESC, hour DESC); CREATE TABLE daily_aggregate_precip ( wsid text, year int, month int, day int, precipitation counter, PRIMARY KEY ((wsid), year, month, day) ) WITH CLUSTERING ORDER BY (year DESC, month DESC, day DESC);

- 86. Efficient Stream Computation val kafkaStream = KafkaUtils.createStream[K, V, KDecoder, VDecoder] (ssc, kafkaParams, topicMap, StorageLevel.DISK_ONLY_2) .map(transform) .map(RawWeatherData(_)) kafkaStream.saveToCassandra(keyspace, raw_ws_data) /** Per `wsid` and timestamp, aggregates hourly precip by day in the stream. */ kafkaStream.map { weather => (weather.wsid, weather.year, weather.month, weather.day, weather.oneHourPrecip) }.saveToCassandra(keyspace, daily_precipitation_aggregations) • Gets the partition key: Data Locality. Spark C* Connector feeds this to Spark • Cassandra Counter column in our schema, no expensive `reduceByKey` needed. Simply let C* do it: not expensive and fast.
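Why a counter column removes the need for `reduceByKey` can be shown with a small model: every write is an increment applied to whatever value is already stored under the `(wsid, year, month, day)` key, so per-day totals accumulate on the storage side. The in-memory `Map` below stands in for the table, and all names are assumptions for this sketch. Cassandra counters hold 64-bit integers, which is why the deltas are modelled as `Long`.

```scala
object CounterSketch {
  // Primary key of the daily aggregate table: (wsid, year, month, day).
  type DayKey = (String, Int, Int, Int)

  // Applies each write as an increment to the stored counter for its key.
  def applyIncrements(store: Map[DayKey, Long], writes: Seq[(DayKey, Long)]): Map[DayKey, Long] =
    writes.foldLeft(store) { case (acc, (key, delta)) =>
      acc.updated(key, acc.getOrElse(key, 0L) + delta)
    }
}
```

Because increments commute, hourly readings for the same day can arrive in any order, or in different micro-batches, and still add up to the same daily total.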

- 87. class TemperatureActor(sc: SparkContext, settings: WeatherSettings) extends AggregationActor { import akka.pattern.pipe def receive: Actor.Receive = { case e: GetMonthlyHiLowTemperature => highLow(e, sender) } def highLow(e: GetMonthlyHiLowTemperature, requester: ActorRef): Unit = sc.cassandraTable[DailyTemperature](keyspace, daily_temperature_aggr) .where("wsid = ? AND year = ? AND month = ?", e.wsid, e.year, e.month) .collectAsync() .map(MonthlyTemperature(_, e.wsid, e.year, e.month)) pipeTo requester } C* data is automatically sorted by most recent - due to our data model. Additional Spark or collection sort not needed.

- 89. @helenaedelson github.com/helena slideshare.net/helenaedelson

- 90. Resources • Spark Cassandra Connector: github.com/datastax/spark-cassandra-connector, github.com/killrweather/killrweather, groups.google.com/a/lists.datastax.com/forum/#!forum/spark-connector-user • Apache Spark: spark.apache.org • Apache Cassandra: cassandra.apache.org • Apache Kafka: kafka.apache.org • Akka: akka.io

- 91. Thanks for listening! Cassandra Summit, September 22-24, 2015 | Santa Clara Convention Center, Santa Clara, CA • 3,000 attendees in 2014
